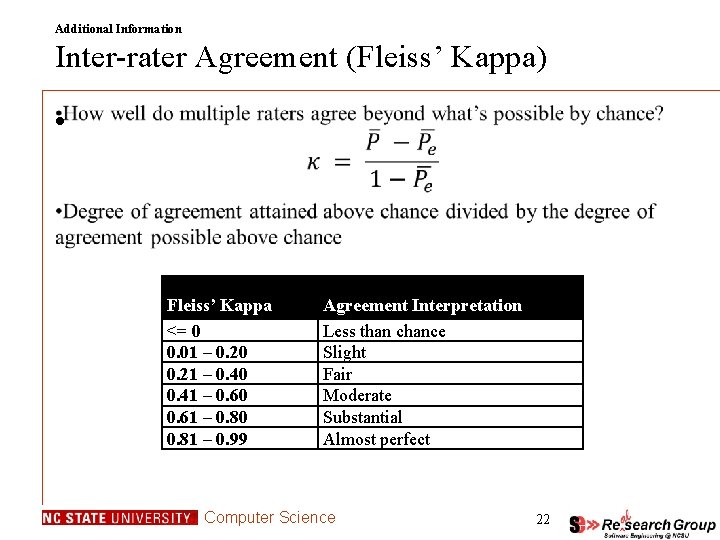

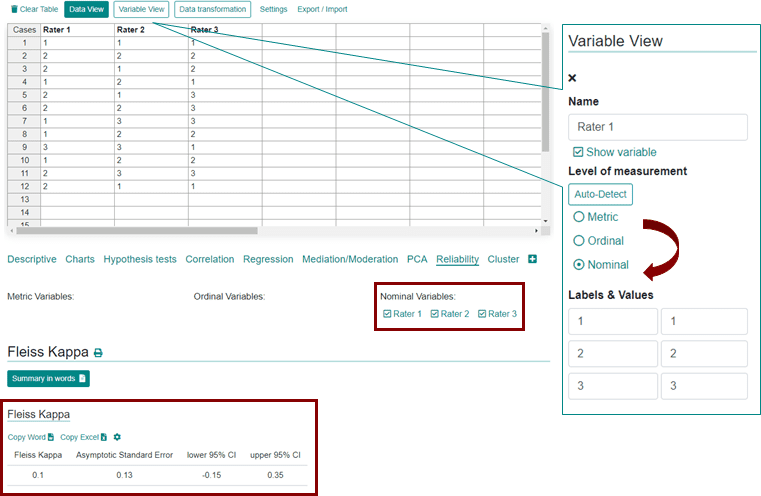

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

PLOS ONE: Standardization for Ki-67 Assessment in Moderately Differentiated Breast Cancer. A Retrospective Analysis of the SAKK 28/12 Study

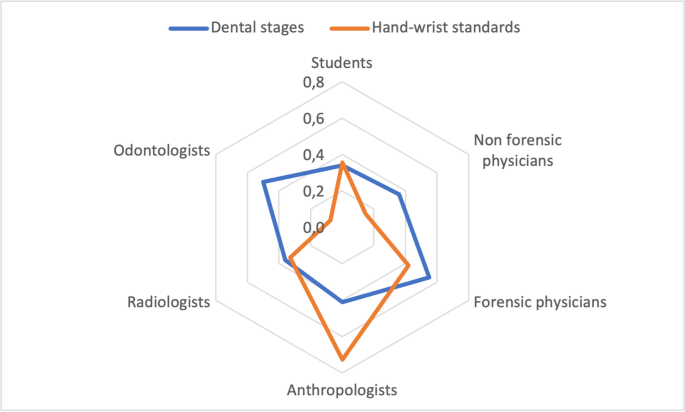

Analysis of interrater reliability in age assessment of minors: how does expertise influence the evaluation? | SpringerLink

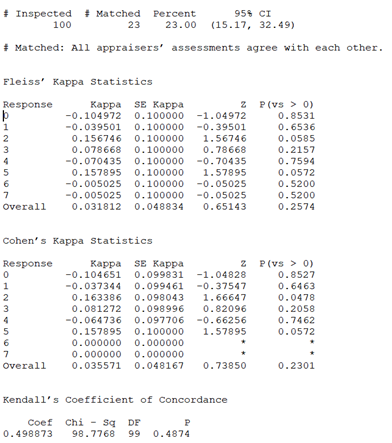

Kappa Value/ Kendall's Coefficient - We ask and you answer! The best answer wins. - Benchmark Six Sigma Forum

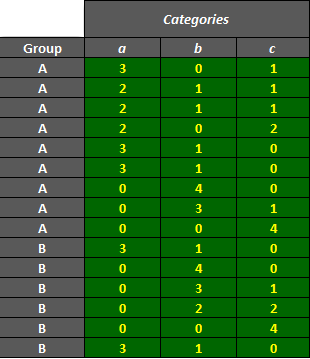

![Fuzzy Fleiss-kappa for Comparison of Fuzzy Classifiers | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/8aa54d5299fb48d6a7355c766573ecb520a43393/3-Table1-1.png)

![Fuzzy Fleiss-kappa for Comparison of Fuzzy Classifiers | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/8aa54d5299fb48d6a7355c766573ecb520a43393/5-Table3-1.png)

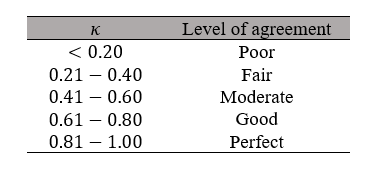

![Fleiss' Kappa and Inter rater agreement interpretation [24]](https://www.researchgate.net/profile/Vijay-Sarthy-Sreedhara/publication/281652142/figure/tbl3/AS:613853020819479@1523365373663/Fleiss-Kappa-and-Inter-rater-agreement-interpretation-24.png)